Are you ready to explore the intriguing world of AI voice deepfakes? Imagine technology that mimics the human voice so closely that it blurs the line between reality and illusion. Driven by rapid advances in deep learning, AI voice deepfakes have become a striking and widely discussed phenomenon. But how exactly do they work? And what do they mean for various industries, particularly entertainment? Join us as we uncover the intricacies of AI voice deepfakes and explore their potential impact on our lives.

Contents

- 1 What is AI Voice Deepfake?

- 2 The Rise of AI Voice Deepfake

- 3 Understanding Deep Learning Algorithms

- 4 How AI Voice Deepfake Works

- 5 Realistic Voice Imitations With AI

- 6 Applications in Entertainment Industry

- 7 AI Voice Deepfake in Advertising and Marketing

- 8 Enhancing Cybersecurity With AI Voice Deepfake

- 9 The Threat of Identity Theft

- 10 Combating Misinformation and Fake News

- 11 Ethical Concerns and Privacy Issues

- 12 Legal Implications of AI Voice Deepfake

- 13 The Future of AI Voice Deepfake

- 14 Protecting Yourself From AI Voice Deepfake

- 15 Conclusion: Navigating the Complexities of AI Voice Deepfake

- 16 Frequently Asked Questions

- 16.1 How Can AI Voice Deepfake Technology Be Used in the Entertainment Industry?

- 16.2 What Are the Ethical Concerns and Privacy Issues Related to AI Voice Deepfake?

- 16.3 How Can AI Voice Deepfake Technology Enhance Cybersecurity?

- 16.4 What Are the Legal Implications of AI Voice Deepfake?

- 16.5 How Can Individuals Protect Themselves From AI Voice Deepfake Attacks?

- 17 Conclusion

What is AI Voice Deepfake?

AI voice deepfake refers to the use of artificial intelligence technology, particularly deep learning algorithms, to manipulate or generate human-like voices in audio recordings. Similar to other forms of deepfake technology, which can create convincing fake images and videos, AI voice deepfake aims to mimic or impersonate a specific person’s voice with remarkable accuracy.

The process typically involves training a deep learning model on audio recordings of the target individual's voice. The model learns the patterns, nuances, and characteristics of that voice, which allows it to generate new audio clips that sound like the target person speaking. Depending on the system, this may require hours of recordings or, for some recent systems, only a short sample of the target's speech.
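Before a model can "analyze the patterns" of a voice, the raw audio is usually converted into features. As a minimal sketch, assuming NumPy and a plain log-magnitude spectrogram (real systems often use mel spectrograms or learned features instead), the helper `log_spectrogram` below slices audio into short overlapping frames and measures how much energy each frame has at each frequency. The 25 ms frame and 10 ms hop are common choices, not requirements, and the 220 Hz tone is only a stand-in for a voice recording.

```python
import numpy as np

def log_spectrogram(signal, sample_rate, frame_ms=25.0, hop_ms=10.0):
    """Split audio into overlapping frames and return log-magnitude spectra.

    Each row is one short frame of audio; each column is a frequency bin.
    """
    frame_len = int(sample_rate * frame_ms / 1000)
    hop_len = int(sample_rate * hop_ms / 1000)
    window = np.hanning(frame_len)  # taper frame edges to reduce spectral leakage
    n_frames = 1 + (len(signal) - frame_len) // hop_len
    frames = np.stack([
        signal[i * hop_len : i * hop_len + frame_len] * window
        for i in range(n_frames)
    ])
    magnitudes = np.abs(np.fft.rfft(frames, axis=1))
    return np.log(magnitudes + 1e-8)  # small offset avoids log(0)

sr = 16_000
t = np.arange(sr) / sr                     # one second of audio
tone = 0.5 * np.sin(2 * np.pi * 220 * t)   # stand-in for a voice recording
spec = log_spectrogram(tone, sr)
print(spec.shape)  # (number of frames, number of frequency bins)
```

A sequence of rows like these is the kind of input that the neural networks described in the following sections learn from.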

AI voice deepfake technology has various potential applications, including in the entertainment industry for dubbing and voice acting, in virtual assistants to create more natural-sounding interactions, and in accessibility tools for individuals with speech disabilities. However, it also raises concerns about the potential for misuse, such as creating fake audio recordings for malicious purposes, impersonating individuals for fraud or social engineering, or spreading misinformation through fabricated recordings of public figures. As with other forms of deepfake technology, the ethical and legal implications of AI voice deepfakes are subject to ongoing debate and scrutiny.

The Rise of AI Voice Deepfake

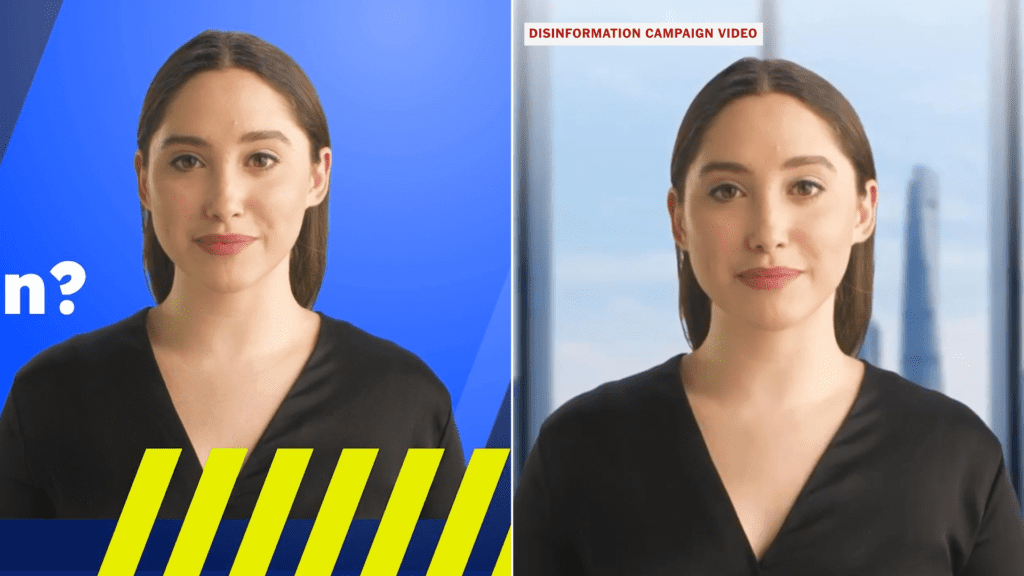

The rise of AI voice deepfake technology has sparked significant concern and debate regarding its potential implications for privacy and security. Deepfake technology, which uses artificial intelligence to manipulate or create realistic audio and video content, has evolved rapidly in recent years. While initially used for entertainment purposes, such as creating viral videos and impersonations, it has raised serious questions about its potential misuse.

One of the main implications of deepfake technology is the erosion of trust in audio recordings. With the ability to fabricate convincing voice recordings, it becomes increasingly difficult to distinguish between real and manipulated content. This poses a threat to individuals’ privacy as their voices can be easily replicated and used to spread false information or engage in fraudulent activities. Furthermore, this technology could be exploited by malicious actors to impersonate others, leading to reputational damage and potential legal ramifications.

Another concern is the impact of AI voice deepfake technology on national security. As the technology becomes more sophisticated, it could be used to deceive individuals in positions of power, such as government officials or business leaders. This opens up possibilities for espionage, political manipulation, and even the destabilization of institutions. The potential for deepfakes to be used as a tool for propaganda or disinformation campaigns is also a significant worry.

While there are undoubtedly negative implications associated with AI voice deepfake technology, it is important to acknowledge that it also has potential positive applications. For instance, it could be utilized in the entertainment industry to create more realistic voiceovers or enhance virtual reality experiences. However, the risks and implications must be carefully considered to ensure that this technology is used responsibly and ethically. Robust regulations and safeguards should be implemented to mitigate the potential harm caused by AI voice deepfakes.

Understanding Deep Learning Algorithms

With the rise of AI voice deepfake technology and its potential implications, it becomes crucial to understand the underlying mechanisms of deep learning algorithms. Deep learning algorithms are a subset of machine learning algorithms that are inspired by the structure and function of the human brain. These algorithms leverage neural network architectures to process and learn from vast amounts of data, enabling them to perform complex tasks such as image recognition, natural language processing, and voice synthesis.

Understanding deep learning algorithms begins with grasping the concept of neural network architectures. Neural networks are composed of interconnected nodes called neurons, which are organized in layers. The input layer receives data, which is then processed through a series of hidden layers before reaching the output layer. Each neuron computes a weighted sum of its inputs, adds a bias term, and passes the result through an activation function (such as ReLU or tanh), which determines its output.
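The layer-by-layer flow described above can be sketched in a few lines of NumPy. This is a minimal illustration, assuming a small fully connected network with ReLU hidden layers and a linear output layer; the layer sizes and random weights are arbitrary, and `forward` is a hypothetical helper rather than any library's API.

```python
import numpy as np

def relu(x):
    # Activation function: passes positive values, zeroes out negatives
    return np.maximum(0.0, x)

def forward(x, layers):
    """Run input x through a list of (weights, biases) layers.

    Each neuron computes a weighted sum of its inputs plus a bias; hidden
    layers apply ReLU, and the final (output) layer is left linear.
    """
    *hidden, (w_out, b_out) = layers
    for w, b in hidden:
        x = relu(x @ w + b)
    return x @ w_out + b_out

rng = np.random.default_rng(0)
layers = [
    (rng.normal(size=(4, 8)), np.zeros(8)),  # input (4 features) -> hidden (8 neurons)
    (rng.normal(size=(8, 8)), np.zeros(8)),  # hidden -> hidden
    (rng.normal(size=(8, 2)), np.zeros(2)),  # hidden -> output (2 values)
]
x = rng.normal(size=(3, 4))  # a batch of 3 input vectors
print(forward(x, layers).shape)
```

Each `x @ w + b` computes the weighted sums for every neuron in a layer at once, which is why deep learning frameworks are built around matrix operations.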

One commonly used neural network architecture is the convolutional neural network (CNN). CNNs are particularly effective for image and video analysis due to their ability to capture spatial relationships in data, and they are also widely applied to spectrograms, which represent audio as a time-frequency image. Another popular architecture is the recurrent neural network (RNN), which excels in tasks involving sequential data, such as natural language processing and speech recognition.
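What makes an RNN suited to sequences is a hidden state that is carried from one step to the next. The sketch below is a minimal, untrained Elman-style RNN in NumPy, assuming each step receives one audio frame as a 201-value feature vector (for example, one row of a spectrogram); the sizes, random weights, and the `rnn_forward` helper are illustrative assumptions.

```python
import numpy as np

def rnn_forward(frames, w_in, w_rec, b):
    """Process a sequence one step at a time with a simple (Elman) RNN.

    The hidden state h carries information from earlier frames forward,
    which is what lets RNNs model sequential data such as speech.
    """
    h = np.zeros(w_rec.shape[0])
    states = []
    for x_t in frames:
        # New state mixes the current input with the previous state
        h = np.tanh(x_t @ w_in + h @ w_rec + b)
        states.append(h)
    return np.stack(states)

rng = np.random.default_rng(1)
n_features, n_hidden = 201, 16   # one spectrogram frame in, 16 hidden units
frames = rng.normal(size=(98, n_features))  # random stand-in for 98 audio frames
w_in = rng.normal(scale=0.05, size=(n_features, n_hidden))
w_rec = rng.normal(scale=0.1, size=(n_hidden, n_hidden))
states = rnn_forward(frames, w_in, w_rec, np.zeros(n_hidden))
print(states.shape)  # one hidden-state vector per input frame
```

Practical speech models typically use gated variants such as LSTMs or GRUs, which keep information over longer spans than this plain recurrence.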

Deep learning algorithms learn by adjusting the weights and biases of the connections between neurons. During training, a loss function measures the error between the predicted and actual outputs. Backpropagation then applies the chain rule to calculate how much each weight and bias contributed to that error (the gradient), and an optimizer such as gradient descent uses those gradients to update the network's parameters. This cycle repeats many times until the error stops improving meaningfully, indicating that the network has learned the underlying patterns in the training data, although it rarely reaches a true minimum.
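To make the training loop concrete, here is a minimal sketch of backpropagation written out by hand in NumPy. It assumes a tiny network (one hidden layer of 16 tanh neurons) fitting a toy curve, `sin(pi * x)`, with mean squared error and plain gradient descent; the data, sizes, learning rate, and fixed step count are illustrative choices, and real voice models are trained with frameworks that compute these gradients automatically.

```python
import numpy as np

rng = np.random.default_rng(0)
X = rng.uniform(-1, 1, size=(64, 1))
y = np.sin(np.pi * X)  # toy target the network should learn

# One hidden layer of 16 tanh neurons, one linear output neuron
W1 = rng.normal(scale=0.5, size=(1, 16))
b1 = np.zeros(16)
W2 = rng.normal(scale=0.5, size=(16, 1))
b2 = np.zeros(1)
lr = 0.1

losses = []
for step in range(2000):
    # Forward pass
    h = np.tanh(X @ W1 + b1)
    pred = h @ W2 + b2
    losses.append(float(np.mean((pred - y) ** 2)))  # mean squared error

    # Backward pass: chain rule from the loss back to every parameter
    d_pred = 2 * (pred - y) / len(X)      # dLoss/dpred
    dW2 = h.T @ d_pred
    db2 = d_pred.sum(axis=0)
    d_h = (d_pred @ W2.T) * (1 - h ** 2)  # tanh'(z) = 1 - tanh(z)^2
    dW1 = X.T @ d_h
    db1 = d_h.sum(axis=0)

    # Gradient-descent update: step each parameter against its gradient
    W1 -= lr * dW1
    b1 -= lr * db1
    W2 -= lr * dW2
    b2 -= lr * db2

print(f"loss: {losses[0]:.3f} -> {losses[-1]:.3f}")
```

For simplicity the loop runs a fixed number of steps; in practice training is usually stopped when the loss on held-out data stops improving.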

Understanding deep learning algorithms is essential for recognizing the potential vulnerabilities and limitations of AI voice deepfake technology. By comprehending the inner workings of these algorithms, researchers and developers can develop robust countermeasures to combat the misuse of this technology and ensure its responsible deployment in society.

How AI Voice Deepfake Works

To understand how AI voice deepfake works, it is important to explore the technology behind it, the ethical concerns and implications it raises, and the methods used for detection and prevention. By analyzing the underlying algorithms and techniques, you can gain insight into the process of creating fake voices that are convincingly realistic. Considering the ethical implications and potential misuse of this technology, it becomes crucial to develop effective methods for detecting and preventing the spread of AI voice deepfakes.

Technology Behind Voice Deepfake

How does AI make voice deepfakes possible? Deep learning models are trained on a dataset of audio recordings of a person's voice and learn its characteristic patterns, including intonation (how pitch rises and falls), rhythm, and pronunciation. Once trained, these models can generate speech that sounds like the target individual, even saying words that person never spoke. Many systems work in two stages: one model predicts an acoustic representation of the speech, such as a spectrogram, and a second model, called a vocoder, converts that representation into an audible waveform. By learning the nuances and idiosyncrasies of the target voice, voice deepfake applications can produce highly convincing imitations, which makes it much harder to judge the authenticity and trustworthiness of audio.
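Intonation is largely the rise and fall of pitch over time, so measuring pitch is one way to see what a model must learn to reproduce. The sketch below is a minimal autocorrelation-based pitch estimator in NumPy. It is a classic signal-processing technique, not what any particular deepfake system uses; the 60 to 400 Hz search range, the 40 ms frame, and the synthetic 200 Hz "voiced" frame are assumptions chosen for illustration.

```python
import numpy as np

def estimate_pitch(frame, sample_rate, fmin=60.0, fmax=400.0):
    """Estimate the fundamental frequency (pitch) of a voiced frame.

    Uses autocorrelation: a periodic signal correlates strongly with a copy
    of itself delayed by one period, so the best lag gives the period.
    """
    frame = frame - frame.mean()
    corr = np.correlate(frame, frame, mode="full")[len(frame) - 1:]  # lags 0..N-1
    min_lag = int(sample_rate / fmax)  # shortest period considered
    max_lag = int(sample_rate / fmin)  # longest period considered
    best_lag = min_lag + int(np.argmax(corr[min_lag:max_lag + 1]))
    return sample_rate / best_lag

sr = 16_000
n = 640                                 # 40 ms of audio at 16 kHz
t = np.arange(n) / sr
frame = np.sin(2 * np.pi * 200 * t)     # synthetic "voiced" frame at 200 Hz
print(round(estimate_pitch(frame, sr), 1))
```

Running this on consecutive frames of real speech yields a pitch contour, the rising and falling pattern that a convincing voice clone has to match.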

Ethical Concerns and Implications

AI voice deepfake technology raises significant ethical concerns and implications due to its potential to manipulate and deceive through the creation of highly convincing imitations of human speech. These ethical considerations stem from the fact that AI voice deepfakes can be used to spread misinformation, impersonate individuals, and potentially harm reputations. The ability to mimic someone’s voice with such precision raises concerns about privacy and consent, as individuals can have their voices replicated without their knowledge or permission. Moreover, the societal impact of AI voice deepfakes is worrisome, as they can be used to create fake audio evidence that can be used in legal proceedings or to manipulate public opinion. As this technology advances, it is crucial for policymakers and society as a whole to address these ethical concerns and establish regulations to mitigate potential harm.

Detection and Prevention Methods

Detection and prevention methods for AI voice deepfakes use advanced algorithms and machine learning techniques to examine audio for signs of manipulation or synthetic generation. Understanding the technology behind AI voice deepfakes is crucial for developing these defenses. By examining characteristics of human speech such as intonation, pitch, and phonetic patterns, algorithms can compare a recording against a database of known genuine voices to identify discrepancies. In addition, machine learning classifiers can be trained on labeled examples of genuine and synthetic audio, including real-world deepfakes, to improve their accuracy at detecting manipulated or synthetic speech. Together, these methods aim to defend against the growing threat of AI voice deepfakes and protect the integrity and trustworthiness of audio content.
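The idea of training a classifier on labeled genuine and synthetic examples can be sketched with logistic regression in NumPy. This is a deliberately simplified illustration: the two feature values per clip are made up and drawn from two well-separated random clusters, whereas real detectors use much richer acoustic features, far larger datasets, and usually deep networks. `train_detector` and `predict_synthetic` are hypothetical helpers.

```python
import numpy as np

def train_detector(features, labels, lr=0.5, steps=1000):
    """Fit a logistic-regression classifier: label 1 = synthetic, 0 = genuine."""
    X = np.hstack([features, np.ones((len(features), 1))])  # add bias column
    w = np.zeros(X.shape[1])
    for _ in range(steps):
        p = 1.0 / (1.0 + np.exp(-X @ w))             # predicted P(synthetic)
        w -= lr * X.T @ (p - labels) / len(labels)   # gradient step on log-loss
    return w

def predict_synthetic(w, features, threshold=0.5):
    X = np.hstack([features, np.ones((len(features), 1))])
    return 1.0 / (1.0 + np.exp(-X @ w)) >= threshold

# Toy data only: two made-up acoustic features per clip, drawn from two
# well-separated clusters; real detectors use far richer features.
rng = np.random.default_rng(0)
genuine = rng.normal(loc=[0.0, 0.0], scale=0.5, size=(200, 2))
synthetic = rng.normal(loc=[2.0, 2.0], scale=0.5, size=(200, 2))
features = np.vstack([genuine, synthetic])
labels = np.concatenate([np.zeros(200), np.ones(200)])

w = train_detector(features, labels)
accuracy = np.mean(predict_synthetic(w, features) == labels.astype(bool))
print(f"training accuracy: {accuracy:.2f}")
```

Accuracy on training data overstates real-world performance; a detector has to be evaluated on held-out audio, ideally from generation methods it has never seen.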

Realistic Voice Imitations With AI

Realistic voice imitations achieved through the use of AI technology have garnered significant attention in recent years. This advancement in AI has led to various real-time applications and has implications for voice authentication.

AI-powered voice imitation technology has made significant strides in creating highly realistic imitations of human voices. This has opened up a wide range of possibilities for real-time applications. For instance, in the entertainment industry, AI voice imitations can be used to replicate the voices of famous actors or singers for dubbing or vocal performances. This technology can also be used in virtual assistants or chatbots to provide more natural and human-like interactions.

Voice authentication, a method that verifies a person's identity based on their unique vocal characteristics, is directly threatened by realistic AI voice imitations. Fraudsters can potentially use AI-generated imitations to bypass voice authentication systems, which raises serious security concerns. For instance, an attacker could use AI to imitate someone's voice and gain unauthorized access to sensitive information or carry out fraudulent activities.
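Many voice authentication systems reduce a recording to a numeric "voiceprint" (an embedding) and accept a caller whose embedding is similar enough to the enrolled one. The sketch below assumes that design, using cosine similarity and an arbitrary threshold of 0.8; the embeddings are random stand-ins rather than output from a real speaker-recognition model, and `verify_speaker` is a hypothetical helper. It shows why a good clone is dangerous: if its embedding lands close to the voiceprint, the threshold check cannot tell it apart from the real speaker.

```python
import numpy as np

def cosine_similarity(a, b):
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))

def verify_speaker(enrolled, attempt, threshold=0.8):
    """Accept the attempt if its voice embedding is close enough to the enrolled one."""
    return cosine_similarity(enrolled, attempt) >= threshold

rng = np.random.default_rng(42)
enrolled = rng.normal(size=128)                           # stored voiceprint (hypothetical)
same_person = enrolled + rng.normal(scale=0.3, size=128)  # new recording, natural variation
stranger = rng.normal(size=128)                           # unrelated speaker
clone = enrolled + rng.normal(scale=0.2, size=128)        # convincing clone lands close by

for name, emb in [("same person", same_person), ("stranger", stranger), ("clone", clone)]:
    print(name, verify_speaker(enrolled, emb))
```

This is why voice-based systems are increasingly paired with dedicated anti-spoofing checks or with additional authentication factors.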

However, it is important to note that while AI voice imitations have the potential for misuse, there are also efforts being made to develop robust detection and prevention methods. Researchers are working on improving voice authentication systems to detect AI-generated imitations and differentiate them from genuine human voices. These advancements aim to mitigate the risks associated with realistic voice imitations and ensure the integrity of voice-based authentication systems.

Applications in Entertainment Industry

The development of realistic voice imitation technology has not only led to advancements in voice authentication, but it has also found valuable applications in the entertainment industry. One of the significant areas where this technology is making an impact is the music industry. With AI voice deepfake, artists can now recreate the voices of famous singers or even mimic the vocal style of their favorite artists. This opens up a world of possibilities for musicians, allowing them to experiment with different vocal styles and collaborate with virtual versions of iconic singers.

Furthermore, AI voice deepfake can also be used in the production of voiceovers for movies, TV shows, and video games. This technology enables voiceover actors to lend their voices to characters without the need for extensive recording sessions. It can save time and resources, making the voiceover process more efficient.

Additionally, AI voice deepfake can be used to preserve the voices of actors and singers who are no longer able to perform due to age or illness. By using their previous recordings, AI can recreate their voices, allowing them to continue contributing to the entertainment industry. While there are concerns about the ethical implications of AI voice deepfake in the entertainment industry, such as the potential for fraudulent use or the loss of job opportunities for human voice actors, its applications offer new creative possibilities and efficiencies in music production and voiceover work.

AI Voice Deepfake in Advertising and Marketing

AI voice deepfake technology has gained significant traction in the advertising and marketing industry, revolutionizing the way brands communicate with their audiences. As the technology continues to advance, it presents both opportunities and challenges for advertisers and marketers.

One of the key applications of AI voice deepfake in advertising and marketing is its potential to create personalized and targeted campaigns. By using AI algorithms to analyze consumer data, brands can generate voiceovers whose voice, accent, or tone is tailored to their target audience. This level of personalization can enhance the effectiveness of advertising messages, as consumers are more likely to engage with content that resonates with them on a personal level.

However, the use of AI voice deepfake in advertising and marketing also raises ethical concerns. The ability to replicate someone’s voice with near-perfect accuracy raises questions about consent and authenticity. Consumers may feel deceived if they discover that the voice behind a brand’s message is actually an AI-generated deepfake. This can lead to a loss of trust and credibility for the brand.

Another potential impact of AI voice deepfake in advertising and marketing is its influence on consumer behavior. Voice plays an important role in shaping perceptions and emotions. By using AI voice deepfake technology, advertisers can create persuasive, compelling messages that sway consumer preferences and purchasing decisions, which could make advertising campaigns considerably more effective.

Enhancing Cybersecurity With AI Voice Deepfake

The techniques behind AI voice deepfakes can also be used to strengthen cybersecurity. As technology advances, so do the methods employed by cybercriminals. From phishing scams to impersonation attacks, individuals and organizations face an increasing risk of falling victim to cyber threats, and AI voice technology offers a potential way to push back.

Models that analyze patterns in human speech, including models trained on examples of deepfake audio, can identify and flag malicious attempts to deceive individuals. They can help detect and prevent social engineering attacks, in which cybercriminals imitate someone else's voice to gain access to sensitive information. The same technology can also be used to authenticate voices and build more secure voice recognition systems, enhancing the overall security of various applications.

However, the implementation of AI voice deepfake technology also raises ethical implications and concerns regarding privacy. While this technology can be used for legitimate purposes, there is a risk of it being misused for fraudulent activities. For instance, criminals could create convincing voice deepfakes to bypass voice authentication systems or manipulate audio recordings to deceive individuals.

To mitigate these risks, it is crucial to establish robust regulations and guidelines for the ethical use of AI voice deepfake technology. Additionally, organizations should prioritize the protection of privacy by ensuring that individuals’ consent is obtained before their voices are used or manipulated. Transparency and accountability in the use of this technology are essential to maintain trust and safeguard against potential misuse.

The Threat of Identity Theft

With the rise of AI voice deepfake technology, the threat of identity theft looms large in the realm of cybersecurity. As this technology becomes more sophisticated, it becomes harder to safeguard personal security and protect individuals from falling victim to identity theft.

AI voice deepfakes can deceive people by creating a convincing replica of someone's voice. Such replicas can be used to manipulate others into providing sensitive personal information or to impersonate individuals for malicious purposes. The consequences for victims can be significant, including emotional distress, financial loss, and damage to their reputation.

Identity theft can have far-reaching consequences, impacting not only individuals but also businesses and society as a whole. Financial institutions, for example, may face an increase in fraudulent activities as criminals exploit AI voice deepfakes to gain unauthorized access to accounts or make fraudulent transactions. Additionally, the trust between individuals and institutions may erode, leading to a loss of confidence in the digital landscape.

To address this threat, it is crucial to enhance personal security measures and develop robust authentication methods that can detect and prevent AI voice deepfakes. Implementing two-factor authentication, using biometric identifiers, and regularly monitoring for suspicious activities are some ways to mitigate the risks associated with identity theft.

Furthermore, raising awareness about AI voice deepfakes and educating individuals about the signs of potential identity theft can help individuals protect themselves and remain vigilant. Collaboration between technology companies, cybersecurity experts, and law enforcement agencies is also essential to stay ahead of cybercriminals and develop effective strategies to combat this evolving threat.

Combating Misinformation and Fake News

To combat misinformation and fake news, it is crucial to develop effective methods for detecting AI-generated content. This involves implementing advanced algorithms and machine learning techniques to identify subtle signs of manipulation. Additionally, educating the public about the prevalence and potential dangers of fake news can help individuals become more discerning consumers of information, thereby reducing the impact of misinformation on society.

Detecting AI-Generated Content

Detecting AI-generated content is crucial in combating misinformation and fake news, as it allows for the identification and removal of deceptive content from various platforms. With the rapid advancements in technology, AI-generated content has become more sophisticated and harder to detect. However, efforts are being made to develop robust detection methods to protect users from falling victim to misinformation. These methods often rely on the analysis of patterns and inconsistencies in the content, as well as the use of machine learning algorithms. One challenge in detecting AI-generated content is striking a balance between protecting privacy and identifying deceptive content. Privacy concerns arise as detection methods often involve analyzing user data, raising ethical questions that need to be addressed. Nonetheless, ongoing research and collaboration between technology experts, policymakers, and platforms can help develop effective solutions that combat AI-generated content while safeguarding privacy.

Educating the Public

One effective approach to combating misinformation and fake news is educating the public about the dangers of manipulated information and the techniques used to create it. Educational campaigns and public awareness initiatives play a crucial role in equipping individuals with the skills to distinguish authentic content from manipulated content. When people understand the methods used to spread false information, such as AI-generated deepfake voices, they can better identify and critically evaluate the information they encounter. Educational campaigns can include workshops, online resources, and media literacy programs that teach individuals how to fact-check claims and verify sources. Equipped with these skills, people become more resilient to misinformation and can contribute to a more informed and discerning society.

Ethical Concerns and Privacy Issues

Ethical concerns and privacy issues arise when considering the implications of AI voice deepfake technology. While AI voice deepfakes have the potential to revolutionize industries such as entertainment and digital communication, they also raise serious questions about the boundaries of consent, trust, and personal privacy.

One of the primary ethical concerns surrounding AI voice deepfakes is the potential for misuse and deception. With the ability to generate highly realistic voice imitations, there is a risk of individuals being deceived or manipulated. For example, someone’s voice could be replicated without their consent and used to spread false information or commit fraud. This raises questions about the responsibility of individuals and organizations to verify the authenticity of the voices they encounter.

Privacy issues also come to the forefront when discussing AI voice deepfakes. The technology relies on vast amounts of data, including voice recordings, to create realistic imitations. This raises concerns about data privacy and the potential for unauthorized access and misuse of personal information. Companies and individuals who collect and store voice data need to prioritize robust security measures to protect individuals from potential harm.

Additionally, there is a broader societal concern regarding the erosion of trust. As AI voice deepfakes become more advanced and widespread, it may become increasingly difficult to discern between real and manipulated voices. This could lead to a general sense of skepticism and doubt in digital communications, undermining the trust we place in the authenticity of human voices.

Legal Implications of AI Voice Deepfake

The legal implications of AI voice deepfake technology are multifaceted and require careful consideration. The advancement of this technology raises significant ethical implications and legal consequences. While AI voice deepfake technology can be used for harmless entertainment purposes, such as creating voice impressions for movies or video games, it also has the potential to be misused for malicious intent.

One of the primary legal concerns surrounding AI voice deepfakes is the issue of consent. Creating a deepfake of someone’s voice without their permission raises questions of privacy and personal rights. The use of someone’s voice without their consent can lead to defamation, identity theft, or even fraud. As a result, legal systems need to adapt to address these new challenges and provide adequate protection for individuals.

Another legal implication of AI voice deepfakes is the potential for misinformation and manipulation. With the ability to replicate someone's voice, bad actors can deceive people into believing false information or taking actions they would not otherwise take. This poses a threat to public trust and can have serious consequences in various fields, including politics, business, and journalism. Legislation must be developed to combat the spread of malicious deepfake audio content and hold those responsible accountable.

Furthermore, AI voice deepfakes present challenges in legal proceedings. As technology becomes more sophisticated, it becomes increasingly difficult to discern between real and fake audio evidence. This raises concerns about the authenticity and reliability of recorded conversations or phone calls, potentially leading to wrongful convictions or the dismissal of genuine evidence. Legal systems must find ways to verify the authenticity of audio recordings and ensure their admissibility in court.
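One building block for verifying recordings is a cryptographic integrity tag computed at the moment audio is captured. The sketch below uses Python's standard `hmac` and `hashlib` modules; the key and the byte string standing in for audio are hypothetical, and `sign_recording` and `verify_recording` are illustrative helpers. Note its limits: it only shows that the bytes have not changed since signing, not that the original content was genuine, and deployed provenance schemes typically use public-key signatures so that verifiers never need the secret key.

```python
import hashlib
import hmac

def sign_recording(audio_bytes: bytes, key: bytes) -> str:
    """Compute a tag at capture time; any later edit to the bytes changes it."""
    return hmac.new(key, audio_bytes, hashlib.sha256).hexdigest()

def verify_recording(audio_bytes: bytes, key: bytes, tag: str) -> bool:
    expected = sign_recording(audio_bytes, key)
    return hmac.compare_digest(expected, tag)  # constant-time comparison

key = b"device-secret-key"             # hypothetical key held by the recording device
original = b"\x00\x01\x02\x03" * 1000  # stand-in for raw audio bytes
tag = sign_recording(original, key)

tampered = bytearray(original)
tampered[10] ^= 0xFF                   # flip bits in one byte, e.g. a spliced edit
print(verify_recording(original, key, tag))
print(verify_recording(bytes(tampered), key, tag))
```

Even a one-byte change produces a completely different tag, so a recording that still verifies has not been altered since it was signed.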

The Future of AI Voice Deepfake

As we look ahead to the future of AI voice deepfake, there are several key points to consider. Firstly, the ethical implications of this technology raise concerns about the potential for misuse and manipulation. Secondly, technological advancements will likely continue to enhance the capabilities and realism of AI voice deepfake, making it even more difficult to detect. Lastly, legal considerations must be taken into account to ensure appropriate regulations are in place to address the potential harm caused by AI voice deepfake.

Ethical Implications

With the rapid advancements in AI technology, the growing concern over the potential ethical implications surrounding the future of AI voice deepfake cannot be ignored. The emergence of AI voice deepfake technology presents significant ethical dilemmas and societal implications. On one hand, this technology can be utilized for positive purposes, such as improving accessibility for individuals with speech impairments or preserving the voices of loved ones. However, there are also troubling possibilities. AI voice deepfake could be misused for fraudulent activities, such as impersonating someone’s voice for financial gain or spreading misinformation through manipulated audio recordings. Additionally, the potential for AI voice deepfake to undermine trust and authenticity in various domains, including journalism and criminal investigations, raises serious ethical concerns. It is crucial to carefully navigate the ethical landscape of AI voice deepfake to ensure its responsible and beneficial use in society.

Technological Advancements

Technological advancements in AI voice deepfake are shaping the future of this rapidly evolving technology. The rise of deep learning has played a significant role in enhancing the capabilities of AI voice deepfake systems. Deep learning algorithms, with their ability to analyze vast amounts of data and learn patterns, have enabled the creation of more realistic and convincing voice forgeries. This has raised concerns about the potential misuse and ethical implications of technological advancements in AI voice deepfake. The ability to generate highly realistic fake voices can be exploited for various malicious purposes, such as impersonation, fraud, or spreading misinformation. As AI voice deepfake technology continues to advance, it becomes crucial to establish robust safeguards and regulations to mitigate the potential risks and protect individuals from the detrimental effects of voice forgery.

Legal Considerations

Legal considerations regarding the future of AI voice deepfake are crucial in order to address the potential risks and ethical concerns associated with this technology. As AI voice deepfake technology advances, it is important to understand the legal ramifications it may have. One of the key concerns is the violation of data privacy. AI voice deepfake relies on large amounts of data, including voice recordings of individuals. This raises questions about consent, ownership, and potential misuse of personal information. There is a need for clear regulations to protect individuals’ privacy rights and ensure that their voices are not used without their knowledge or permission. Additionally, legal frameworks should establish liability for the creation and dissemination of malicious deepfake content, holding accountable those who use this technology for harmful purposes. Striking the right balance between technological innovation and legal safeguards is essential for the responsible development and deployment of AI voice deepfake.

Protecting Yourself From AI Voice Deepfake

To protect yourself from AI voice deepfakes, it is essential to stay vigilant and adopt precautionary measures. As AI algorithms continue to improve, the potential for malicious actors to create convincing voice forgeries becomes a growing concern. These deepfakes can have a significant psychological impact on individuals and society as a whole.

One way to protect yourself is to be cautious about sharing personal information online. Limit the personal data you disclose on social media platforms, including videos and audio clips of your voice, as this material can be used to create more realistic deepfakes. Additionally, be mindful of the permissions you grant to voice-related apps and services, as they may have access to your voice recordings, which could potentially be exploited.

Another precautionary measure is to stay informed about the latest advancements in AI voice technology. By understanding how these algorithms work, you can better identify potential red flags or anomalies in voice recordings. It is also important to be skeptical of unsolicited voice messages or calls, especially when they involve sensitive or confidential information, and to verify such requests through a separate channel, for example by calling the person back on a number you already know.

Furthermore, consider using multifactor authentication (MFA) for sensitive accounts. MFA adds an extra layer of security by requiring additional verification steps, such as a fingerprint or a unique code sent to your mobile device. This can help prevent unauthorized access to your accounts, even if your voice is replicated.
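A common MFA factor is the six-digit code from an authenticator app, which is typically a time-based one-time password (TOTP). Codes texted to your phone work differently (the service generates and sends a random code), so the sketch below covers only the authenticator-app variant. It follows the HOTP and TOTP algorithms from RFC 4226 and RFC 6238 using only Python's standard library; the secret is the RFC test secret, not a real credential. Because the code depends on a secret stored on your device, a cloned voice alone cannot produce it.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional

def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """HMAC-based one-time password (RFC 4226)."""
    msg = struct.pack(">Q", counter)                 # 8-byte big-endian counter
    digest = hmac.new(secret, msg, hashlib.sha1).digest()
    offset = digest[-1] & 0x0F                       # dynamic truncation
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)

def totp(secret: bytes, at: Optional[float] = None, step: int = 30, digits: int = 6) -> str:
    """Time-based one-time password (RFC 6238): the counter is the current time window."""
    now = time.time() if at is None else at
    return hotp(secret, int(now // step), digits)

def verify_code(secret: bytes, submitted: str, at: Optional[float] = None) -> bool:
    return hmac.compare_digest(totp(secret, at), submitted)

secret = b"12345678901234567890"  # RFC test secret, for illustration only
print(totp(secret))               # changes every 30 seconds
```

Real deployments usually also accept codes from one adjacent time window to tolerate clock drift, and limit how many wrong guesses are allowed.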

Lastly, if you suspect that you have been targeted by an AI voice deepfake, report it to the appropriate authorities or platforms. They can investigate the issue and take necessary action to mitigate the potential harm.

Conclusion: Navigating the Complexities of AI Voice Deepfake

As you navigate the complexities of AI voice deepfake, it is important to consider the ethical implications that arise from this technology. The ability to manipulate someone’s voice raises concerns about privacy, consent, and misinformation. Additionally, detection and prevention methods are crucial in order to mitigate the risks associated with AI voice deepfake, ensuring that individuals and organizations are not deceived by malicious actors. By addressing these points, we can strive towards a more informed and responsible use of AI voice technology.

Ethical Implications

Navigating the complexities of AI voice deepfake brings to light a myriad of ethical implications. The ability to recreate someone’s voice with such accuracy raises concerns about privacy and consent. One of the main ethical concerns is the potential for misuse of AI voice deepfake technology. Unauthorized use of someone’s voice could lead to defamation, harassment, or even identity theft. Furthermore, AI voice deepfake has the potential to manipulate public opinion and spread misinformation on a large scale. Privacy concerns also arise as individuals’ voices can be easily replicated without their knowledge or consent. This can lead to the erosion of trust, as people become skeptical about the authenticity of voice recordings. As AI voice deepfake technology advances, it is crucial to address these ethical implications and implement safeguards to protect individuals’ privacy and prevent malicious use.

Detection and Prevention

The next step in addressing these ethical implications is detecting and preventing malicious AI voice deepfakes. As the technology becomes more sophisticated and accessible, effective detection techniques are needed to identify manipulated audio and limit its spread. One approach uses machine learning algorithms to analyze and compare the acoustic features of real and fake voices, looking for inconsistencies and anomalies. Speaker verification technology can also help flag potential deepfakes by examining a speaker’s unique vocal characteristics and patterns. Prevention is equally important and requires a multi-faceted approach: implementing robust security measures to protect voice data, educating the public about the risks of AI voice deepfakes, and promoting responsible use of AI technologies. By combining detection techniques with preventive measures, we can better guard against the harmful impacts of AI voice deepfakes.
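Speaker verification, mentioned above, often comes down to comparing fixed-length “voiceprint” vectors (embeddings). The Python sketch below covers only that comparison step. It assumes the embeddings have already been produced by some speaker-encoder model, which is not shown, and the function `matches_enrolled_speaker` and its 0.75 threshold are hypothetical values chosen for illustration. A low score flags a recording whose vocal characteristics do not match the enrolled speaker; a high-quality clone may still score high, which is why it makes sense to pair this check with the acoustic-anomaly analysis described above.

```python
import math
from typing import List, Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two embedding vectors (1.0 = same direction)."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("embeddings must be non-zero")
    return dot / (norm_a * norm_b)


def mean_embedding(embeddings: List[Sequence[float]]) -> List[float]:
    """Average several enrollment embeddings into a single voiceprint."""
    dim = len(embeddings[0])
    return [sum(e[i] for e in embeddings) / len(embeddings) for i in range(dim)]


def matches_enrolled_speaker(enrolled: List[Sequence[float]],
                             candidate: Sequence[float],
                             threshold: float = 0.75) -> bool:
    """Return True if the candidate embedding is close to the enrolled voiceprint.

    The threshold is a hypothetical value; real systems calibrate it on labelled data.
    """
    voiceprint = mean_embedding(enrolled)
    return cosine_similarity(voiceprint, candidate) >= threshold
```

For example, with enrollment vectors `[1.0, 0.0, 0.0]` and `[0.9, 0.1, 0.0]`, the candidate `[0.0, 1.0, 0.0]` scores about 0.05 and is rejected. Real embeddings have hundreds of dimensions, but the comparison works the same way.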

Frequently Asked Questions

How Can AI Voice Deepfake Technology Be Used in the Entertainment Industry?

In the entertainment industry, AI voice deepfake technology can be used to enhance performances and improve dubbing. With it, a single actor can convincingly voice several different characters, reducing the need for multiple voice actors and saving time and resources during production. AI voice deepfakes can also preserve the voices of aging or deceased actors, allowing their iconic performances to continue reaching audiences.

What Are the Ethical Concerns and Privacy Issues Related to AI Voice Deepfake?

When it comes to ethical concerns and privacy issues, you have to tread carefully. Navigating AI voice deepfakes can feel like walking through a minefield, where any step can cause harm. Ethical concerns stem from the potential for misuse, manipulation, and deception. Privacy issues arise because a person’s voice, like other personal information, can be copied and exploited without their consent. It’s crucial to address these concerns and establish clear regulations to protect individuals and maintain trust in the digital realm.

How Can AI Voice Deepfake Technology Enhance Cybersecurity?

AI voice deepfake technology can strengthen cybersecurity in two ways: by improving authentication methods and by helping detect social engineering attacks. With this technology, organizations can implement voice-based authentication systems that are harder to deceive. It can also help identify and prevent social engineering attacks, in which malicious actors use manipulated voice recordings to trick people into revealing sensitive information. Detecting these fake voices strengthens cybersecurity measures and helps protect sensitive data and systems.

What Are the Legal Implications of AI Voice Deepfake?

The legal implications of AI voice deepfakes are significant. As the technology spreads, lawmakers and regulators face the challenge of creating effective, enforceable rules. Key legal questions involve consent, privacy, and intellectual property rights, along with protecting individuals from harm and preserving confidence in the authenticity of audio recordings. Balancing the benefits of AI voice deepfakes against the need for proper regulation is crucial to mitigating potential risks and maintaining trust in the technology.

How Can Individuals Protect Themselves From AI Voice Deepfake Attacks?

To protect yourself from AI voice deepfake attacks, there are various countermeasures you can employ. First, be cautious about sharing personal information online. Limit access to your voice recordings and avoid providing them to untrusted sources. Second, regularly update your security software and keep your devices patched to prevent unauthorized access. Lastly, be skeptical of unsolicited voice messages or calls that seem out of character. By taking these personal protection measures, you can reduce the risk of falling victim to AI voice deepfake attacks.

Conclusion

In conclusion, the rise of AI voice deepfake technology has brought both excitement and concern. It enables realistic voice imitations and new applications in the entertainment industry, but it also raises legal questions and makes it necessary for individuals to protect themselves. As we navigate the complexities of AI voice deepfakes, it is essential to stay informed and vigilant. By understanding how this technology works, we can better engage with its implications and help ensure its responsible use in the future.
